The Neuro-Computational Frontier: Visual Reconstruction, Neural Prosthetics, and the Jurisprudence of the Mind in 2026

The convergence of high-field functional Magnetic Resonance Imaging (fMRI), generative artificial intelligence (GAI), and invasive neural interfaces has fundamentally altered the landscape of cognitive neuroscience and forensic science by 2026. This shift centers on the ability to decode the human brain's internal states and translate them into externalized, high-fidelity visual media. At the heart of this evolution are the transition from simple linear mapping to sophisticated, brain-inspired architectures such as the Brain Interaction Transformer (BIT), and the development of visual prosthetics such as Neuralink's Blindsight. As these technologies move from the laboratory toward the courtroom and the clinic, they call for a rigorous evaluation of their technical foundations, of their inherent limitations (collectively referred to as the "interface problem"), and of the emerging legal frameworks designed to govern the privacy of human thought.

The Evolution of Visual Perception Reconstruction

The pursuit of reconstructing visual experiences from human brain activity has moved through several distinct epochs. Early attempts in the first decade of the 21st century relied on mapping fMRI signals to handcrafted image features, such as Gabor filters, to identify which categories of objects a subject was viewing.1 However, these methods were constrained by the low spatial and temporal resolution of fMRI and the lack of powerful generative models capable of synthesizing complex scenes. By 2026, the integration of Latent Diffusion Models (LDM) and Transformer-based encoders has enabled the reconstruction of seen images with remarkable accuracy, capturing not only the semantic category of the stimuli but also their fine-grained structural and spatial characteristics.2

Mechanistic Foundations of fMRI-to-Image Synthesis

Modern reconstruction pipelines typically function by decoding fMRI patterns into a latent space that is compatible with pre-trained visual-linguistic models, such as CLIP (Contrastive Language-Image Pre-training). The fMRI signal, which measures blood-oxygen-level-dependent (BOLD) responses as a proxy for neural activity, is inherently noisy and lags behind actual neural firing by several seconds.4 To overcome this, researchers have developed models like NeuroFusionNet, which utilizes 1D convolutional layers to extract spatial features from Region-of-Interest (ROI) voxels while preserving the brain’s temporal response through a Multi-scale fMRI Timeformer.5

This Timeformer module is critical for capturing the hierarchical nature of visual processing. The primary visual cortex (V1) processes low-level structural features like edges and orientations, while higher-order areas such as the lateral occipital complex (LOC) and the fusiform face area (FFA) process semantic concepts and object identities.6 By processing signals at different scales, the model can synthesize a coherent image that aligns with both the "pixel-level" structural data and the "concept-level" semantic data stored in the BOLD signal.5
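
To make the decoding step concrete, the sketch below assumes the simplest common baseline, a ridge-regression map from voxel patterns to a CLIP-like embedding; it is not NeuroFusionNet's architecture. The data are synthetic, and `fit_ridge`, the toy dimensions, and the noise level are illustrative choices.

```python
import numpy as np

def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression W = (X^T X + alpha*I)^-1 X^T Y.

    X: (n_trials, n_voxels) fMRI patterns; Y: (n_trials, d) image embeddings.
    """
    gram = X.T @ X + alpha * np.eye(X.shape[1])
    return np.linalg.solve(gram, X.T @ Y)

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

rng = np.random.default_rng(0)
n_train, n_vox, d = 200, 50, 16           # toy sizes, far smaller than real ROI data
W_true = rng.normal(size=(n_vox, d))       # hypothetical "true" voxel-to-embedding map
X_train = rng.normal(size=(n_train, n_vox))
Y_train = X_train @ W_true + 0.1 * rng.normal(size=(n_train, d))  # noisy relationship

W = fit_ridge(X_train, Y_train, alpha=1.0)

x_new = rng.normal(size=n_vox)             # a new, held-out fMRI pattern
sim = cosine(x_new @ W, x_new @ W_true)    # predicted vs. true embedding
print(f"cosine similarity: {sim:.3f}")
```

In a real pipeline the predicted embedding would then condition a generative model; the linear map only supplies the bridge from voxels to that latent space.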

| Reconstruction Component | Technical Function | Underlying AI Architecture | Source |
|---|---|---|---|
| Semantic Branch | Steering images toward correct content categories | CLIP / Stable Diffusion | 2 |
| Structural Branch | Initializing image layout and spatial positioning | Deep Image Prior (DIP) / VGG | 1 |
| Voxel Embedding | Mapping 3D brain activity to 1D latent vectors | 1D-Convolution / Fully Connected Layers | 5 |
| Temporal Encoder | Capturing the temporal dynamics of the BOLD response | Multi-scale Transformer (Timeformer) | 5 |
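
The multi-scale idea behind the Temporal Encoder can be illustrated with a deliberately simple stand-in: average-pooling an ROI time series at several window sizes so that later layers can see both fast and slow structure. This is an assumption for illustration only; the Timeformer itself uses transformer layers whose details are not reproduced here, and `multiscale_pool` is a hypothetical helper.

```python
import numpy as np

def multiscale_pool(ts, scales=(1, 2, 4)):
    """Average-pool a (time, features) array at several temporal scales.

    Returns {scale: array of shape (time // scale, features)}; trailing samples
    that do not fill a whole window are dropped.
    """
    pooled = {}
    for s in scales:
        usable = (ts.shape[0] // s) * s
        windows = ts[:usable].reshape(usable // s, s, ts.shape[1])
        pooled[s] = windows.mean(axis=1)
    return pooled

ts = np.arange(16, dtype=float).reshape(8, 2)   # 8 time points (TRs) x 2 ROI features
pooled = multiscale_pool(ts)
for scale, arr in pooled.items():
    print(scale, arr.shape)
```

The finest scale keeps all eight time points, while the coarsest summarizes the first and second halves of the series as [3, 4] and [11, 12]; a transformer would then attend across these views.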

The efficacy of these models is further enhanced by "SynBrain," a generative framework that simulates the transformation from visual semantics to neural responses in a probabilistic manner.7 By using a BrainVAE (Variational Autoencoder), SynBrain models neural representations as continuous probability distributions rather than fixed points, which allows the system to generate high-quality synthetic fMRI signals. This is particularly valuable in data-scarce scenarios, where a researcher might only have one hour of data from a new subject instead of the standard forty hours required by previous state-of-the-art methods.1
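
SynBrain's BrainVAE is not reproduced here. As a minimal sketch, assuming a decoder that outputs a diagonal Gaussian (one mean and one log-variance per voxel), the snippet shows how treating a response as a distribution rather than a fixed point lets one draw many distinct synthetic fMRI patterns for the same stimulus. The function name and all numbers are hypothetical.

```python
import numpy as np

def sample_synthetic_fmri(mu, log_var, n_samples, rng):
    """Draw samples from N(mu, diag(exp(log_var))) with the reparameterization
    trick: x = mu + sigma * eps, eps ~ N(0, I)."""
    sigma = np.exp(0.5 * log_var)
    eps = rng.standard_normal((n_samples, mu.shape[0]))
    return mu + sigma * eps

rng = np.random.default_rng(42)
n_vox = 100
# Hypothetical decoder output for one image: a mean pattern and per-voxel log-variance.
mu = np.linspace(-1.0, 1.0, n_vox)
log_var = np.full(n_vox, np.log(0.25))      # variance 0.25, i.e. std 0.5

samples = sample_synthetic_fmri(mu, log_var, n_samples=5000, rng=rng)
print(samples.shape)                          # (5000, 100)
```

Each row of `samples` is a different plausible response to the same image; this kind of variability is what allows a small real dataset to be augmented.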

The Brain-IT Model and the Interaction Transformer

A notable recent technical achievement is the "Brain-IT" model (first posted to arXiv in October 2025), which introduces the Brain Interaction Transformer (BIT). Unlike traditional models that treat each fMRI scan as a single, monolithic entity, BIT is designed around the biological principle that neural processing is distributed across specialized, interacting brain regions.2

Technical Architecture of BIT

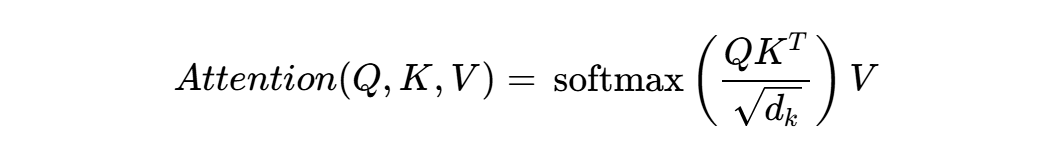

The BIT architecture functions by clustering functionally similar brain-voxels into discrete units. Each cluster is summarized by a "Brain Token," a vector representation that captures the core activity of that functional group.2 These tokens are shared across different subjects, which allows the model to leverage universal patterns of human brain organization. The core of the BIT model is the interaction between these tokens, which is modeled using a standard attention mechanism:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^{\top}}{\sqrt{d_k}}\right)V$$

In this context, $Q$ (Query), $K$ (Key), and $V$ (Value) are derived from the Brain Tokens, and $d_k$ is the dimensionality of the key vectors. This allows the model to "attend" to specific clusters, for instance linking the activity in the color-processing regions (V4) with the object-recognition regions (IT) to ensure the reconstructed image has the correct hues and shapes.1
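
The two steps described above, grouping voxels into clusters and letting the resulting Brain Tokens attend to one another, can be sketched as follows. This is a simplified illustration under stated assumptions, not Brain-IT's implementation: voxels are clustered with plain k-means on hypothetical two-dimensional "functional fingerprints", each token is the cluster mean of activity-weighted voxel embeddings (random stand-ins for learned ones), and a single randomly initialized attention head is applied.

```python
import numpy as np

def farthest_point_init(points, k):
    """Deterministic seeding: start at point 0, then repeatedly add the farthest point."""
    idx = [0]
    for _ in range(1, k):
        d2 = ((points[:, None, :] - points[idx][None, :, :]) ** 2).sum(axis=-1)
        idx.append(int(d2.min(axis=1).argmax()))
    return points[idx].copy()

def kmeans(points, k, n_iter=20):
    """Lloyd's k-means; returns one cluster label per voxel."""
    centers = farthest_point_init(points, k)
    for _ in range(n_iter):
        d2 = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
        labels = d2.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):            # keep the old center if a cluster empties
                centers[j] = points[labels == j].mean(axis=0)
    return labels

def brain_tokens(activity, voxel_emb, labels, k):
    """One token per cluster: mean of (activity * embedding) over that cluster's voxels."""
    weighted = activity[:, None] * voxel_emb
    return np.stack([weighted[labels == j].mean(axis=0) for j in range(k)])

def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)       # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def attention(tokens, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention over the brain tokens."""
    Q, K, V = tokens @ Wq, tokens @ Wk, tokens @ Wv
    weights = softmax(Q @ K.T / np.sqrt(K.shape[-1]))
    return weights @ V, weights

rng = np.random.default_rng(0)
k, d_model, d_k = 3, 8, 4
# Hypothetical functional fingerprints: 3 well-separated groups of 20 voxels each.
group_centers = np.array([[0.0, 0.0], [5.0, 0.0], [0.0, 5.0]])
fingerprints = np.concatenate(
    [c + 0.3 * rng.standard_normal((20, 2)) for c in group_centers]
)
labels = kmeans(fingerprints, k)

activity = rng.standard_normal(60)                # one fMRI pattern: one value per voxel
voxel_emb = rng.standard_normal((60, d_model))    # stand-in for learned voxel embeddings
tokens = brain_tokens(activity, voxel_emb, labels, k)

Wq, Wk, Wv = (rng.standard_normal((d_model, d_k)) for _ in range(3))
out, weights = attention(tokens, Wq, Wk, Wv)
print(tokens.shape, out.shape)
print(weights.round(2))
```

Each row of `weights` sums to 1 and says how much each cluster's token draws on every other cluster; in a trained model these weights would encode relationships such as the V4/IT link mentioned above.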

Dual-Stream Reconstruction

The Brain-IT pipeline utilizes a dual-stream framework to balance generative realism with anatomical faithfulness. The high-level semantic branch predicts image features that guide a diffusion model (such as Stable Diffusion XL) toward the correct semantic content.2 Simultaneously, the low-level structural branch uses predicted VGG features to initialize the diffusion process with a coarse layout. This ensures that the global structure is established by the direct neural evidence before the diffusion process refines the image under semantic conditioning.1

This approach addresses a significant performance gap in earlier fMRI-to-image methods: the "hallucination" problem. Generative priors are often so powerful that they can produce a realistic image of a "dog" based on very weak neural signals, even if the subject was actually looking at a "cat." By constraining the generative process with a dedicated structural branch, Brain-IT achieves reconstructions that are more faithful to the actual seen stimuli.2
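
One way to let a coarse layout steer a diffusion model, assumed here for illustration and named plainly as SDEdit-style initialization rather than Brain-IT's exact procedure, is to noise the layout only part of the way along a standard DDPM schedule and begin denoising from that intermediate step instead of from pure noise. The linear schedule values are common DDPM defaults; `init_from_layout` and the toy square layout are hypothetical.

```python
import numpy as np

def linear_alpha_bar(T=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for a linear DDPM noise schedule."""
    betas = np.linspace(beta_start, beta_end, T)
    return np.cumprod(1.0 - betas)

def init_from_layout(layout, t_start, alpha_bar, rng):
    """Forward-diffuse a coarse layout to step t_start:
    x_t = sqrt(a_t) * x0 + sqrt(1 - a_t) * eps.
    The reverse (denoising) pass, not shown, would start here instead of from pure noise."""
    a = alpha_bar[t_start]
    eps = rng.standard_normal(layout.shape)
    return np.sqrt(a) * layout + np.sqrt(1.0 - a) * eps

def corr_with(x, ref):
    """Pearson correlation between two arrays of the same shape."""
    return float(np.corrcoef(x.ravel(), ref.ravel())[0, 1])

rng = np.random.default_rng(0)
alpha_bar = linear_alpha_bar()
layout = np.zeros((32, 32))
layout[8:24, 8:24] = 1.0                  # hypothetical coarse layout: one bright square

x_partial = init_from_layout(layout, t_start=300, alpha_bar=alpha_bar, rng=rng)
x_full = init_from_layout(layout, t_start=999, alpha_bar=alpha_bar, rng=rng)
print(corr_with(x_partial, layout), corr_with(x_full, layout))
```

At the intermediate step the noisy starting point still correlates with the layout, so the denoiser inherits the neural evidence about global structure; at the final step that correlation is essentially gone, which corresponds to relying on the generative prior alone.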

Reconstructing Internal Imagery: Memory, Thought, and Dreams

Beyond the reconstruction of immediate perception, 2026 has seen breakthroughs in decoding "internal" imagery—visual content that is generated by the brain in the absence of external sensory input. This includes recalled memories, mental visualization, and the vivid imagery of dreams.6

The DreamConnect System

A significant milestone in this domain is "DreamConnect," a brain-to-image system that integrates fMRI with advanced diffusion models to not only reconstruct but also modify mental imagery using natural language instructions.10 Developed by an international team, DreamConnect uses a dual-stream diffusion framework in which the first stream translates brain signals into rough visuals and the second stream refines them based on text commands. For example, if a participant is imagining a house, they can give the instruction "make the house modern," and the system will update the relevant visual features in the reconstructed image accordingly.10

This technology relies on the fact that mental imagery and visual perception share hierarchical neural representations.6 When a person imagines an object, the brain activates many of the same high-level visual areas used during actual sight. However, the activity in early visual areas (V1-V3) is typically weaker during imagery than during perception, which historically made mental imagery harder to decode. Modern systems overcome this by capitalizing on multiple levels of visual cortical representations, optimizing images so their DNN features match the decoded hierarchical features across the entire visual cortex.6
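
The idea of optimizing an image until its features match decoded features can be shown with a toy version in which fixed random linear maps stand in for the layers of a real DNN (the cited work uses a pretrained convolutional network, which is not reproduced here). `F_low`, `F_high`, the step size, and the iteration count are hypothetical choices, and the "decoded" features are assumed to be perfect.

```python
import numpy as np

rng = np.random.default_rng(1)
n_pix = 64
# Hypothetical stand-ins for two DNN layers: fixed random linear feature maps.
F_low = rng.normal(size=(24, n_pix)) / np.sqrt(n_pix)    # "early", V1-like features
F_high = rng.normal(size=(8, n_pix)) / np.sqrt(n_pix)    # "late", higher-level features
layers = [F_low, F_high]

x_seen = rng.normal(size=n_pix)               # the (flattened) image seen or imagined
decoded = [F @ x_seen for F in layers]        # assume these were decoded from fMRI

def loss_and_grad(x):
    """Summed squared feature error across layers, and its gradient w.r.t. the image."""
    loss, grad = 0.0, np.zeros_like(x)
    for F, f in zip(layers, decoded):
        r = F @ x - f
        loss += float(r @ r)
        grad += 2.0 * F.T @ r
    return loss, grad

x = np.zeros(n_pix)                           # start from a blank image
for _ in range(1000):
    loss, g = loss_and_grad(x)
    x -= 0.1 * g                              # plain gradient descent

print(f"final feature loss: {loss:.2e}")
print(f"pixel error vs. seen image: {np.linalg.norm(x - x_seen):.2f}")
```

Because there are fewer features (32) than pixels (64), gradient descent matches the decoded features almost exactly while still ending far from the seen image in pixel space. Many images share the same features, which is one reason such pipelines add a natural-image prior.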

Decoding the Subconscious

The reconstruction of dreams represents the most complex application of this technology. By recording BOLD signals during sleep and waking participants to collect verbal reports of what they had seen, researchers at the ATR Computational Neuroscience Laboratory have been able to reconstruct symbolic or conceptual images of dream content.4 Notably, ATR's best-known dream-decoding experiments sampled the sleep-onset period rather than Rapid Eye Movement (REM) sleep, the stage whose brain activity most closely resembles wakefulness. While these reconstructions are often "fuzzy" or symbolic rather than photographic, they provide an above-chance index of the categories present in dreams.4

A more recent line of dream-decoding work proposes weaving these reconstructed images into coherent video narratives, using Large Language Models (LLMs) to interpret the visual sequences.12 Presented as a "brave new idea" in the multimedia community, it aims to transform fleeting subconscious experiences into permanent, shareable media, bridging the gap between subjective experience and objective data.12

Current Limitations and the "Interface" Problem

Despite the remarkable progress in 2026, the field of neuro-reconstruction is still hampered by the "interface problem"—the set of technical and biological constraints that prevent a perfect 1-to-1 mapping between brain activity and digital media.2

Signal-to-Noise Ratio (SNR) and Hemodynamic Lag

The primary biological limitation is the nature of the fMRI signal itself. BOLD signals are indirect measures of neural activity, reflecting changes in blood oxygenation that take several seconds to peak after a neuron has fired. This "hemodynamic lag" makes it difficult to reconstruct fast-moving visual sequences or real-time thoughts.4 Furthermore, fMRI voxels—the 3D pixels used in brain scans—typically represent the average activity of millions of neurons. This lack of granularity means that much of the "fine code" of the brain is lost in translation.14
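
The hemodynamic lag can be made concrete by convolving a brief neural event with a canonical hemodynamic response function. The sketch assumes the widely used double-gamma form with SPM-style shape parameters (6 and 16, undershoot ratio 1/6); real HRFs vary across brain regions and individuals.

```python
import math
import numpy as np

def double_gamma_hrf(t, peak_shape=6.0, under_shape=16.0, ratio=1.0 / 6.0):
    """Canonical double-gamma HRF (SPM-style shape parameters, 1 s time constant):
    a gamma-shaped positive response minus a smaller, later undershoot."""
    g_peak = t ** (peak_shape - 1) * np.exp(-t) / math.gamma(peak_shape)
    g_under = t ** (under_shape - 1) * np.exp(-t) / math.gamma(under_shape)
    return g_peak - ratio * g_under

dt = 0.1                                    # seconds per sample
t = np.arange(0.0, 30.0, dt)
hrf = double_gamma_hrf(t)

onset = 20                                  # brief neural event at t = 2.0 s
neural = np.zeros_like(t)
neural[onset] = 1.0
bold = np.convolve(neural, hrf)[: t.size]   # causal convolution: predicted BOLD signal

lag = t[bold.argmax()] - t[onset]
print(f"BOLD peaks about {lag:.1f} s after the neural event")
```

Even though the neural event lasts a single sample, the predicted BOLD signal peaks roughly five seconds later and stays elevated for several more, which is why rapid visual sequences blur together in fMRI.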

Non-Stationarity and Individual Variability

Brain signals are non-stationary, meaning the patterns of activity for a specific thought can change over time due to fatigue, stress, or neuroplasticity.13 Additionally, the way a "tree" is represented in one person's brain is not exactly the same as in another's. While Brain-IT addresses this through shared cluster tokens, the "alignment" process remains computationally intensive and prone to error when transferring models between subjects.1
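
Brain-IT relies on shared cluster tokens, but the underlying alignment problem can be illustrated with a classic, swapped-in technique: orthogonal Procrustes, the rotation step used in hyperalignment-style methods. Given two subjects' responses to the same stimuli, it finds the rotation that best maps one onto the other. The synthetic shared representation and noise level are assumptions.

```python
import numpy as np

def procrustes_rotation(source, target):
    """Orthogonal Procrustes: the orthogonal R minimizing ||source @ R - target||_F.

    source, target: (n_stimuli, n_features) responses of two subjects to the SAME stimuli.
    """
    U, _, Vt = np.linalg.svd(source.T @ target)
    return U @ Vt

def relative_error(pred, target):
    """Frobenius-norm error relative to the target's norm."""
    return float(np.linalg.norm(pred - target) / np.linalg.norm(target))

rng = np.random.default_rng(0)
n_stim, n_feat = 100, 10
shared = rng.normal(size=(n_stim, n_feat))                    # hypothetical common code
R_true, _ = np.linalg.qr(rng.normal(size=(n_feat, n_feat)))   # subject B's private rotation
subj_a = shared + 0.05 * rng.normal(size=shared.shape)
subj_b = shared @ R_true + 0.05 * rng.normal(size=shared.shape)

R = procrustes_rotation(subj_a, subj_b)
before = relative_error(subj_a, subj_b)
after = relative_error(subj_a @ R, subj_b)
print(f"relative error before alignment: {before:.2f}, after: {after:.2f}")
```

The sketch works cleanly only because both subjects viewed identical stimuli and differ by a pure rotation; real subjects differ in less tidy ways, which is part of why cross-subject transfer remains error-prone.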

Epistemic Lock-in and AI Reliability

A new limitation identified in 2026 is the "AI 2026 problem," an epistemic risk where structural error patterns in the AI models used for decoding become embedded in the historical or forensic record.15 If a model consistently misinterprets a specific neural pattern, and that interpretation is used to build further models or to provide evidence in court, it creates a "False-Correction Loop" (FCL). This can lead to a state where the AI’s "story" of what the brain is thinking overrides the biological reality, a phenomenon known as "epistemic lock-in".15

Neuralink and the Blindsight Revolution

While fMRI-based systems are non-invasive and useful for research, they are too bulky for daily use. Neuralink has addressed the "interface" problem from the opposite direction by developing "Blindsight," an invasive brain-computer interface (BCI) designed to restore visual perception to the blind.16

Technical Progress and March 2026 Status

As of March 2026, Neuralink has announced plans to begin "high-volume production" of its N1 brain chips during 2026 and is preparing for expanded human clinical trials.18 The Blindsight device is designed to bypass the eyes and optic nerve entirely, transmitting data from a wearable camera directly to an array of electrodes implanted in the visual cortex.17

The system’s current capabilities and 2026 milestones include:

- Breakthrough Device Designation: Received from the FDA in September 2024, accelerating the regulatory path for vision restoration in patients with complete blindness.17

- Surgical Automation: The R1 robot now performs "through-dura" insertion, where the 1,024 electrodes are placed without removing the brain's protective membrane, reducing surgical risk.17

- High-Volume Production: Elon Musk announced on January 1, 2026, that the company is scaling to industrial levels, with the goal of moving BCI technology from an "expensive experiment" to a "viable treatment option" for millions.18

| Device Parameter | Neuralink Blindsight (2026) | Conventional Visual Prosthetics | Source |
|---|---|---|---|
| Electrode Count | 1,024 (distributed across 64 threads) | 60-100 (typically rigid arrays) | 17 |
| Implantation Method | Automated robot (R1), through-dura insertion | Manual neurosurgical placement | 18 |
| Target Population | Complete blindness, including loss of the eyes or optic nerves | Partial blindness (e.g., macular degeneration) | 20 |
| Power/Data | Wireless inductive charging / Bluetooth | Transcutaneous or percutaneous links | 23 |

While the initial resolution of Blindsight is expected to be low—similar to early video game graphics—the company’s roadmap suggests that future iterations could eventually exceed normal human vision, potentially allowing users to see in infrared or ultraviolet wavelengths.17
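
To get a feel for what 1,024 electrodes might mean for resolution, the sketch assumes, purely for illustration, that each electrode produces one phosphene on a regular 32 × 32 grid with four brightness levels. Real cortical implants do not work this way: phosphene locations are irregular and follow the distorted retinotopic map of the visual cortex. `to_phosphene_grid` and its parameters are hypothetical.

```python
import numpy as np

def to_phosphene_grid(image, grid=(32, 32), levels=4):
    """Block-average a grayscale image (values in [0, 1]) onto a coarse grid and
    quantize brightness, as a crude stand-in for a 1,024-electrode percept."""
    h, w = image.shape
    gh, gw = grid
    bh, bw = h // gh, w // gw
    cropped = image[: gh * bh, : gw * bw]
    blocks = cropped.reshape(gh, bh, gw, bw).mean(axis=(1, 3))
    return np.round(blocks * (levels - 1)) / (levels - 1)

# A synthetic 256 x 256 "camera frame": a left-to-right brightness gradient.
frame = np.tile(np.linspace(0.0, 1.0, 256), (256, 1))
phosphenes = to_phosphene_grid(frame)
print(phosphenes.shape, np.unique(phosphenes))
```

A smooth 65,536-pixel gradient collapses into 1,024 cells and four gray levels, which is roughly the "early video game graphics" experience the text describes.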

Brain Fingerprinting: History, Capabilities, and Case Studies

The forensic application of brain technology, known as "brain fingerprinting" (BF), has a long and contentious history that has reached a critical juncture in 2026. This technology aims to determine if a person has "guilty knowledge" of a crime by measuring their involuntary brainwave responses to specific stimuli.25

The P300 Response and the Farwell Protocol

Brain fingerprinting, as pioneered by Dr. Lawrence Farwell, centers on the P300 response, an event-related potential (ERP) that occurs when a subject recognizes something significant.25 In a forensic setting, the subject is shown "Probes" (details only the perpetrator would know), "Targets" (details the subject is known to know), and "Irrelevants" (plausible but incorrect details that serve as a baseline). If the Probes elicit a P300 response resembling the one evoked by the Targets rather than the weaker response to the Irrelevants, this is taken to indicate that the information is stored in the subject's memory.25

Farwell later introduced the "MERMER" (Memory and Encoding Related Multifaceted Electroencephalographic Response), which includes the P300 and a subsequent negative-going wave. He claimed that this refined protocol achieved 99.9% accuracy.29 However, the technology has been criticized for a lack of independent validation and for being vulnerable to countermeasures, such as subjects deliberately making irrelevant stimuli personally significant (for example, by covertly reacting to them) so that those stimuli also elicit a P300 and blur the contrast the test relies on.25
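
The probe/target comparison can be sketched with simulated data. This is a simplified amplitude-based rule, not Farwell's published analysis (which uses bootstrapped correlations); the P300 shape, noise level, analysis window, and trial counts are all assumptions, and the "irrelevant" category stands for plausible but incorrect details used as a baseline.

```python
import numpy as np

fs = 250                                       # sampling rate in Hz (assumed)
t = np.arange(0.0, 1.0, 1.0 / fs)              # 1 s epoch after each stimulus

def simulate_epochs(p300_uv, n_trials, rng, noise_uv=5.0):
    """Hypothetical single-trial EEG: a Gaussian 'P300' bump at ~400 ms plus white noise."""
    bump = p300_uv * np.exp(-((t - 0.4) ** 2) / (2 * 0.08 ** 2))
    return bump + noise_uv * rng.standard_normal((n_trials, t.size))

def mean_amplitude(epochs, window=(0.3, 0.8)):
    """Average across trials, then across the post-stimulus window."""
    mask = (t >= window[0]) & (t <= window[1])
    return float(epochs.mean(axis=0)[mask].mean())

def classify(probes, targets, irrelevants):
    """Is the probe response closer to the target response or to the irrelevant one?"""
    p, tg, irr = (mean_amplitude(x) for x in (probes, targets, irrelevants))
    return "information present" if abs(p - tg) < abs(p - irr) else "information absent"

rng = np.random.default_rng(0)
targets = simulate_epochs(10.0, 40, rng)        # known details: large P300
irrelevants = simulate_epochs(0.0, 160, rng)    # incorrect details: no P300
probes_known = simulate_epochs(10.0, 40, rng)   # subject recognizes the crime details
probes_unknown = simulate_epochs(0.0, 40, rng)  # subject does not

print(classify(probes_known, targets, irrelevants))    # information present
print(classify(probes_unknown, targets, irrelevants))  # information absent
```

Because the decision rests on the contrast between target-like and irrelevant-like responses, any countermeasure that makes irrelevant items evoke a P300 of their own narrows exactly the gap this rule depends on.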

Landmark Cases (1999–2026)

The legal journey of brain fingerprinting is marked by several landmark cases that demonstrate its evolving role in the justice system.

- Harrington v. State (2001–2003, USA): In perhaps the most famous case, an Iowa district court admitted BF evidence in Terry Harrington's 2001 post-conviction proceedings; the evidence suggested he did not recognize details of the crime scene while recognizing details of his alibi. The Iowa Supreme Court overturned his murder conviction in 2003, but on other grounds (the prosecution's failure to disclose police reports), without relying on the BF evidence. The case nonetheless remains one of the few U.S. examples of BF evidence being ruled admissible.25

- State v. Grinder (1999, USA): James B. Grinder confessed to a 1984 murder after a BF test indicated his brain recognized crime-relevant information. This case is often cited by proponents as a "success" of the technology as an investigative tool.27

- Aditi Sharma (2008, India): An Indian court cited the "Brain Electrical Oscillations Signature" (BEOS) test, a variant of BF, in convicting Sharma of poisoning her former fiancé. The case drew international criticism, and in 2010 the Indian Supreme Court ruled in Selvi v. State of Karnataka that such tests could not be administered without the subject's consent.30

- The D.B. Cooper Case (2024–2026, USA): New forensic testing was conducted between 2024 and 2025 on a parachute found on the property of suspect Richard McCoy II. The FBI returned the parachute in December 2025 without a definitive public conclusion. Unconfirmed reports claim that a "neural-trace" analysis was used to compare the suspect's known skydiving patterns with the artifacts found on the harness; these reports have not been verified, and the evidence at issue is physical rather than neural.31

- State v. Dabate (2025, USA): Known as the "Fitbit Murder," this case highlighted the shift toward multi-modal forensics. While not a brain scan case, it set a precedent for using "scientifically reliable data" from wearable technology to dismantle a defendant's fabricated story, which could pave the way for more complex neural data to be admitted under similar evidentiary standards.32

The Legal Status of Brain Scans in 2026 Court Cases

As of early 2026, the admissibility of brain scans in criminal cases remains highly contested, governed by a complex web of evidentiary rules and by considerable judicial uncertainty about how to weigh such evidence.14

Admissibility Standards: Daubert and Frye

In the United States, federal courts and most states apply the Daubert standard, which requires that scientific evidence be helpful to the jury, based on sufficient data, and derived from reliable methods.14 A minority of states still follow the older Frye standard, which asks whether a method is generally accepted in the relevant scientific community. In cases like United States v. Semrau, fMRI-based lie detection was excluded because its false-positive rate (often cited as 60-70%) was deemed too high for a courtroom.14
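
Why error rates weigh so heavily in admissibility decisions can be illustrated with Bayes' rule. The numbers below are hypothetical and are not taken from Semrau or any cited study; they show how the probability that a positive result is correct depends on how common deception is in the tested population.

```python
def positive_predictive_value(sensitivity, false_positive_rate, base_rate):
    """P(deceptive | positive result), by Bayes' rule."""
    true_pos = sensitivity * base_rate
    false_pos = false_positive_rate * (1.0 - base_rate)
    return true_pos / (true_pos + false_pos)

# Hypothetical test: catches 90% of deceptive examinees, flags 10% of truthful ones.
print(positive_predictive_value(0.9, 0.1, base_rate=0.10))            # 0.5
print(round(positive_predictive_value(0.9, 0.1, base_rate=0.01), 3))  # 0.083
```

With a 10% false-positive rate, a positive result is a coin flip when one in ten examinees is deceptive, and is wrong more than nine times in ten when only one in a hundred is.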

By 2026, the Massachusetts Supreme Judicial Court's decision in Commonwealth v. Chism (2025) has become a key reference. The court excluded an sMRI brain scan of a 14-year-old defendant, ruling that a scan taken months after the crime could not reliably shed light on the defendant's state of mind at the time of the offense.14 This "temporal gap" remains one of the biggest hurdles for neuroimaging in criminal law.

Federal Rule of Evidence 707 and AI Analysis

The proposed Federal Rule of Evidence 707, approved for public comment in June 2025, marks the first formal attempt to regulate machine-generated evidence, including AI-analyzed brain scans.33 Rule 707 would require any machine-generated conclusion (such as an AI's report that a brain scan indicates "deception" or "memory recognition") to meet the same reliability standards as a human expert under Rule 702.35

The rule would prevent lawyers from presenting an AI's output as an indisputable fact without allowing for the "cross-examination" of the algorithm's inputs and training data.37 Critics, however, argue that it creates an uneven playing field, as only wealthy litigants can afford the technical experts required to deconstruct an AI's logic.37

| Legal Rule | Impact on Brain Scans (2026) | Primary Legal Concern | Source |
|---|---|---|---|
| Daubert Standard | Requires a reliable methodology; general acceptance is one factor alongside testability, peer review, and error rate | Reliability and error rates | 14 |
| Rule 403 | Allows exclusion if probative value is substantially outweighed by the risk of unfair prejudice or of misleading the jury | Juror bias from colorful images | 14 |
| Rule 707 (proposed) | Would subject AI analysis to Rule 702 scrutiny | Algorithmic "black box" problem | 34 |
| CCPA Amendment | Classifies neural data as "sensitive personal information" | Privacy and data ownership | 40 |

Modern AI: Enhancing the Reliability of Neural Testing

To overcome the challenges of signal noise and judicial skepticism, 2026 has seen the emergence of AI-powered systems designed specifically to enhance the reliability and objectivity of brain-based tests.

Denoising and Signal Enhancement

Generative AI is now used to perform "general denoising" of EEG and fMRI data. Diffusion models, in particular, excel at this by learning to reverse a progressive noising process, which allows them to recover clean neural signals from artifacts like muscle movement or scanner noise.13 By encoding the temporal structure of neural activity into spectrograms, these models can filter out "non-stationary" interference that previously degraded BCI performance.13
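
The spectrogram representation mentioned above can be computed with a short-time Fourier transform. The sketch assumes a single synthetic EEG channel with a 10 Hz (alpha-band) rhythm buried in noise; the diffusion-based denoiser that would operate on such spectrograms is not shown, and `stft_power` is a hypothetical helper written with plain NumPy.

```python
import numpy as np

def stft_power(x, fs, nperseg=256, hop=128):
    """Power spectrogram from a Hann-windowed short-time Fourier transform.

    Returns (freqs, power), where power has shape (n_frames, nperseg // 2 + 1).
    """
    window = np.hanning(nperseg)
    n_frames = 1 + (len(x) - nperseg) // hop
    frames = np.stack([x[i * hop : i * hop + nperseg] * window for i in range(n_frames)])
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    freqs = np.fft.rfftfreq(nperseg, d=1.0 / fs)
    return freqs, power

fs = 256                                        # Hz, assumed sampling rate
t = np.arange(0, 10, 1 / fs)                    # 10 s of data
rng = np.random.default_rng(0)
eeg = np.sin(2 * np.pi * 10 * t) + 0.5 * rng.standard_normal(t.size)

freqs, power = stft_power(eeg, fs)
dominant = freqs[power.mean(axis=0).argmax()]
print(f"{power.shape[0]} frames, dominant frequency {dominant:.1f} Hz")
```

The time-by-frequency array `power` is the kind of image-like input a diffusion model can learn to clean; here the 10 Hz rhythm stands out clearly above the broadband noise.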

Automated Assessment Platforms

The "NeuroCatch 2.0" platform is one example of automated cognitive assessment in 2026. By integrating objective neurophysiological measures (ERPs) with cognitive-behavioral data, NeuroCatch can provide a "brain vital sign" report in about six minutes.42 According to its developers, the system is designed to be "impervious to user bias or test manipulation," such as malingering, because it measures direct physiological responses rather than subjective behavioral answers.28

Synthetic Data and Few-Shot Learning

Modern AI also addresses the problem of small sample sizes. Frameworks like "SynBrain" use few-shot adaptation to learn a new subject's neural patterns with minimal data, augmenting limited fMRI datasets with high-quality synthetic signals.7 This makes the reconstruction models more robust across different individuals and reduces the risk of "overfitting" to a single subject's unique brain anatomy.2

Ethical Implications and the Future of Neural Privacy

The ability to reconstruct thoughts and images directly from the brain has necessitated a fundamental shift in how we conceive of privacy. In 2024 and 2025, California became one of the first U.S. states to join the "neurorights" movement, amending the California Consumer Privacy Act (CCPA) to include "neural data" as sensitive personal information.25

The CCPA Neural Data Amendment

Under the new 2025 regulations, neural data is defined as information generated by measuring the activity of a consumer's central or peripheral nervous system.40 Key provisions include:

- Purpose Limitation: Businesses can only use neural data for the specific purpose for which it was collected.40

- Data Deletion: Information must be deleted as soon as that purpose is accomplished.40

- Opt-Out Rights: Consumers have the right to know what neural data is being collected and to opt-out of its sale or sharing.40
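
As a minimal sketch of how an engineering team might model these three provisions, a store can tag every record with the purpose it was collected for, refuse cross-purpose use, delete by purpose, disclose its holdings, and track sale opt-outs. The class and method names are hypothetical, and the mapping from statutory language to code is an assumption rather than a statement of what compliance requires.

```python
from dataclasses import dataclass

@dataclass
class NeuralRecord:
    """Hypothetical record: neural samples tagged with the purpose they were collected for."""
    subject_id: str
    purpose: str
    samples: list

class NeuralDataStore:
    """Toy store enforcing purpose limitation, deletion, disclosure and sale opt-out."""

    def __init__(self):
        self._records = []
        self._completed = set()
        self._opted_out = set()

    def add(self, record):
        if record.purpose in self._completed:
            raise ValueError(f"purpose {record.purpose!r} is already completed")
        self._records.append(record)

    def use(self, subject_id, purpose):
        """Purpose limitation: only records collected for this exact purpose are returned."""
        return [r for r in self._records
                if r.subject_id == subject_id and r.purpose == purpose]

    def complete_purpose(self, purpose):
        """Deletion: once a purpose is accomplished, every record collected for it is dropped."""
        self._completed.add(purpose)
        self._records = [r for r in self._records if r.purpose != purpose]

    def disclose(self, subject_id):
        """Right to know: which purposes hold data about this subject, and how much."""
        return [(r.purpose, len(r.samples)) for r in self._records
                if r.subject_id == subject_id]

    def opt_out_of_sale(self, subject_id):
        self._opted_out.add(subject_id)

    def may_share(self, subject_id):
        return subject_id not in self._opted_out

store = NeuralDataStore()
store.add(NeuralRecord("u1", "seizure-alerts", [0.12, 0.40]))
store.add(NeuralRecord("u1", "sleep-study", [0.31]))
print(store.disclose("u1"))           # [('seizure-alerts', 2), ('sleep-study', 1)]
store.complete_purpose("seizure-alerts")
store.opt_out_of_sale("u1")
print(store.disclose("u1"), store.may_share("u1"))   # [('sleep-study', 1)] False
```

Keying deletion to the purpose rather than to a fixed retention period mirrors the "delete as soon as that purpose is accomplished" language above.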

This legislative framework is a direct response to the "glass box" problem—the risk that as AI makes brain activity more transparent, the most intimate parts of the human experience become commodified or used as tools for surveillance.46

Conclusion: The New Frontier of the Mind

The research and development landscape of 2026 reveals a world where the boundary between the internal mind and the external world is becoming increasingly permeable. Technologies like the Brain-IT model and DreamConnect have demonstrated that visual perception and internal imagery are no longer entirely "locked" within the skull; within current limits, they can be reconstructed, manipulated, and shared as digital artifacts.2 Simultaneously, developers of invasive interfaces such as Neuralink are working to turn this science into a practical reality for those seeking to regain lost senses.17

However, the "interface problem" remains a significant barrier to the widespread adoption of these technologies in high-stakes environments like the courtroom. The biological constraints of fMRI resolution and the epistemic risks of AI-driven hallucination require a cautious approach to "cognitive evidence".14 The legal system's response, embodied in proposed Rule 707 and new privacy laws, suggests a future where neural data is treated with the same weight as DNA, but with even greater protections for the fundamental right to mental integrity.34 As we move toward 2030, the primary challenge will not be whether we can read the mind, but how we choose to govern the incredible power that comes with doing so.

Works cited

- Brain-IT: Image Reconstruction from fMRI via Brain-Interaction Transformer - arXiv.org, accessed March 4, 2026, https://arxiv.org/html/2510.25976v1

- Brain-IT: Image Reconstruction from fMRI via Brain-Interaction Transformer - arXiv, accessed March 4, 2026, https://arxiv.org/html/2510.25976v2

- Stable Diffusion with Brain Activity, accessed March 4, 2026, https://sites.google.com/view/stablediffusion-with-brain/

- Decoding dreams with AI: how the brain reveals what we dream about. - Soy Marta Gan, accessed March 4, 2026, https://soymartagan.com/en/decoding-dreams-with-ai/

- NeuroFusionNet: cross-modal modeling from brain activity to visual understanding, accessed March 4, 2026, https://www.frontiersin.org/journals/computational-neuroscience/articles/10.3389/fncom.2025.1545971/full

- Deep image reconstruction from human brain activity - PMC, accessed March 4, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC6347330/

- SynBrain: Enhancing Visual-to-fMRI Synthesis via Probabilistic Representation Learning, accessed March 4, 2026, https://openreview.net/forum?id=ZTHYaSxqmq

- Decoding the brain: from neural representations to mechanistic models - PMC, accessed March 4, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC11637322/

- [2510.25976] Brain-IT: Image Reconstruction from fMRI via Brain-Interaction Transformer, accessed March 4, 2026, https://arxiv.org/abs/2510.25976

- Researchers develop an AI system to modify the brain's mental imagery with words, accessed March 4, 2026, https://dig.watch/updates/researchers-develop-an-ai-system-to-modify-the-brains-mental-imagery-with-words

- From mind to image: Guiding dreams with AI | EurekAlert!, accessed March 4, 2026, https://www.eurekalert.org/news-releases/1096544

- Decoding the Dreams into a Coherent Video Story from fMRI Signals (work in progress) - arXiv, accessed March 4, 2026, https://arxiv.org/html/2501.09350v1

- Advancing brain-computer interfaces with generative AI: A review of ..., accessed March 4, 2026, https://www.the-innovation.org/article/doi/10.59717/j.xinn-life.2026.100198

- Neuroimaging Evidence in Criminal Cases - Law and Biosciences ..., accessed March 4, 2026, https://law.stanford.edu/2026/02/13/neuroimaging-evidence-in-criminal-cases/

- (PDF) An Evidence-Based Warning about the AI 2026 Problem: False-Correction Loops, Epistemic Lock-in, and the Need for FCL-S Cross-Ecosystem Evidence for the False-Correction Loop and the Systemic Suppression of Novel - ResearchGate, accessed March 4, 2026, https://www.researchgate.net/publication/398529834_An_Evidence-Based_Warning_about_the_AI_2026_Problem_False-Correction_Loops_Epistemic_Lock-in_and_the_Need_for_FCL-S_Cross-Ecosystem_Evidence_for_the_False-Correction_Loop_and_the_Systemic_Suppression_

- Visual Prosthesis | Neuralink, accessed March 4, 2026, https://neuralink.com/trials/visual-prosthesis/

- Inside Blindsight: Elon Musk's ambitious project to cure blindness in 2026 - India Today, accessed March 4, 2026, https://www.indiatoday.in/science/story/neuralink-blindsight-elon-musk-brain-chip-brain-computer-interface-blind-visually-impaired-retina-optic-nerve-restore-vision-visual-cortex-fda-2842936-2026-01-03

- Neuralink to Begin 'High-Volume Production' of Brain Chips in 2026, Musk Says - Fintool, accessed March 4, 2026, https://fintool.com/news/neuralink-mass-production-2026

- Neuralink Goes Mass Production, Surgery Now Fully Automated - Gotrade, accessed March 4, 2026, https://www.heygotrade.com/en/news/neuralink-goes-mass-production-surgery-now-fully-automated

- Neuralink prepares first human trials of Blindsight implant, accessed March 4, 2026, https://www.thenews.com.pk/latest/1390244-neuralink-prepares-first-human-trials-of-blindsight-implant

- Elon Musk: Neuralink to boost production, automate surgeries - Becker's Hospital Review, accessed March 4, 2026, https://www.beckershospitalreview.com/healthcare-information-technology/innovation/elon-musk-neuralink-to-boost-production-automate-surgeries/

- Wireless retinal implant helps blind patients see again - ScienceDaily, accessed March 4, 2026, https://www.sciencedaily.com/releases/2026/03/260302030640.htm

- Technology | Neuralink, accessed March 4, 2026, https://neuralink.com/technology/

- Blind Korean YouTuber volunteers for Musk's Neuralink vision trial - The Korea Times, accessed March 4, 2026, https://www.koreatimes.co.kr/business/tech-science/20260304/blind-korean-youtuber-volunteers-for-musks-neuralink-vision-trial

- Brain Fingerprinting: Can Science Really Read Your Mind? | by Santosh Srinivasaiah | Jan, 2026 | Medium, accessed March 4, 2026, https://medium.com/@srinivas.santosh/brain-fingerprinting-can-science-really-read-your-mind-73a1fb6275a4

- 'Brain fingerprinting' has crime-solving potential for New Zealand, pilot study finds, accessed March 4, 2026, https://lawfoundation.org.nz/?p=8026

- Brain fingerprinting - Wikipedia, accessed March 4, 2026, https://en.wikipedia.org/wiki/Brain_fingerprinting

- Technology - NeuroCatch, accessed March 4, 2026, https://www.neurocatch.com/technology/

- Palmer, Robin --- "Time to take brain-fingerprinting seriously? A consideration of international developments in Forensic Brainwave Analysis (FBA), in the context of the need for independent verification of FBA's scientific validity, and the potential legal implications of its use in New Zealand" [2017] NZCrimLawRw 22 - NZLII, accessed March 4, 2026, https://www.nzlii.org/nz/journals/NZCrimLawRw/2017/22.html

- Evidence of memory from brain data - PMC - NIH, accessed March 4, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC8249088/

- Part 4: Who Is D.B. Cooper? FBI's 'One-In-A-Billion' Parachute Returns | Cowboy State Daily, accessed March 4, 2026, https://cowboystatedaily.com/2026/02/22/fbis-one-in-a-billion-parachute-returns-and-revives-d-b-cooper-mystery/

- Real-World Impact: Digital Forensics Case Studies and Conclusion, accessed March 4, 2026, https://lucidtruthtechnologies.com/digital-forensics-case-studies/

- A Collision Course in Evidentiary Standards for AI-Assisted Financial Forensics - QuickRead, accessed March 4, 2026, https://quickreadbuzz.com/2026/02/11/dorothy-haraminac-ai-assisted-financial-forensics/

- Proposed New Federal Rule Regarding AI-Generated Evidence - Meyers | Nave, accessed March 4, 2026, https://www.meyersnave.com/proposed-new-federal-rule-regarding-ai-generated-evidence/

- Proposed New FRE 707 | Law Library | University of Illinois Chicago, accessed March 4, 2026, https://library.law.uic.edu/news-stories/proposed-new-fre-707/

- Safeguarding the Courtroom from AI-Generated Evidence: Federal Rule of Evidence 707 Approved by Judicial Conference - Nelson Mullins, accessed March 4, 2026, https://www.nelsonmullins.com/insights/blogs/red-zone/news/safeguarding-the-courtroom-from-ai-generated-evidence-federal-rule-of-evidence-707-approved-by-judicial-conference

- Man vs. Machine: Proposed Federal Rule of Evidence 707 Aims to Combat Artificial Intelligence Usage in the Courtroom Through Expert Testimony Standards - Villanova Law Review, accessed March 4, 2026, https://www.villanovalawreview.com/post/3458-man-vs-machine-proposed-federal-rule-of-evidence-707-aims-to-combat-artificial-intelligence-usage-in-the-courtroom-through-expert-testimony-standard

- Evidence Rule 707 Joint Comment Final - ACM, accessed March 4, 2026, https://downloads.regulations.gov/USC-RULES-EV-2025-0034-0058/attachment_1.pdf

- Should Brain Scans Be Used As Evidence in Trademark Litigation? - Petrie-Flom Center, accessed March 4, 2026, https://petrieflom.law.harvard.edu/2023/03/31/should-brain-scans-be-used-as-evidence-in-trademark-litigation/

- Brain-computer interfaces: neural data - SENATE HEALTH, accessed March 4, 2026, https://sjud.senate.ca.gov/system/files/2025-04/sb-44-umberg-sjud-analysis.pdf

- California Amends CCPA to Cover Neural Data and Clarify Scope of Personal Information, accessed March 4, 2026, https://www.hunton.com/privacy-and-cybersecurity-law-blog/california-amends-ccpa-to-cover-neural-data-and-clarify-scope-of-personal-information

- NeuroCatch: Home, accessed March 4, 2026, https://www.neurocatch.com/

- The NeuroCatch Platform for Healthcare - Rackcdn.com, accessed March 4, 2026, https://7157e75ac0509b6a8f5c-5b19c577d01b9ccfe75d2f9e4b17ab55.ssl.cf1.rackcdn.com/RWSMEYDL-PDF-3-589724-448597622.pdf

- A Practical and Accessible Measure of Brain Vital Signs: the NeuroCatch® Platform - Rackcdn.com, accessed March 4, 2026, https://7157e75ac0509b6a8f5c-5b19c577d01b9ccfe75d2f9e4b17ab55.ssl.cf1.rackcdn.com/RWSMEYDL-PDF-2-589724-448597622.pdf

- Data Privacy Update | White & Case LLP, accessed March 4, 2026, https://www.whitecase.com/insight-alert/data-privacy-update-2025

- TechDispatch #1/2024 - Neurodata | European Data Protection Supervisor, accessed March 4, 2026, https://www.edps.europa.eu/data-protection/our-work/publications/techdispatch/2024-06-03-techdispatch-12024-neurodata_en

- AI Reveals How Brain Activity Unfolds Over Time | Stanford HAI, accessed March 4, 2026, https://hai.stanford.edu/news/ai-reveals-how-brain-activity-unfolds-over-time