A Survival Guide to Data

by Gemma Mindell

We live in an era where a peer-reviewed, longitudinal study involving ten thousand participants is frequently countered by the ultimate rhetorical nuclear weapon: “Well, that’s not what happened to my cousin’s roommate, and he eats a lot of kale.”

There is a growing, whimsical trend of treating statistics like a Choose Your Own Adventure novel. If the data suggests that your favorite hobby—say, competitive unicycling—is bad for your knees, the common response isn’t to examine the p-value. It’s to declare that “numbers are just opinions with hats on” and go back to pedaling.

Let’s explore this grand misunderstanding of how we know what we know, and why “Logic” shouldn’t be a dirty word.

1. The “Lies, Damned Lies, and My Intuition” Fallacy

Public skepticism of statistics usually stems from a traumatic event involving a high school algebra teacher or a confusing weather report. This has led to the popular mantra: “You can make statistics say anything!”

While technically true—I could arguably use statistics to prove that 100% of people who drink water eventually die—this doesn’t mean the tool is broken. It means the carpenter is a prankster. Dismissing all statistics because some are manipulated is like refusing to use a map because you once saw a drawing of Middle-earth. One is a navigation tool; the other is a fantasy.

The Power of the N of 1

The most common rival to the statistical method is Anecdotal Evidence. We love a good story. If a study says that a specific medication has a 95% success rate, but your Aunt Brenda took it and started seeing ghosts, the public doesn’t see “one rare exception.” They see “Brenda’s Ghost-Pills.”

In the scientific world, Aunt Brenda is an “outlier.” In the court of public opinion, she is the star witness for the prosecution. We must remember that while Brenda’s ghosts are fascinating at Thanksgiving, they do not constitute a representative sample.

2. Research Methods: The “Vibe Check” vs. The Rules

People often dismiss research as “ivory tower nonsense” because they don’t realize that Research Methods are essentially just a very formal, very expensive way of making sure we aren’t lying to ourselves.

When a study is “invalid,” it isn’t usually a deep-state conspiracy; it’s usually just bad housekeeping. To understand why a study deserves our respect (or a one-way trip to the shredder), we have to look at the ingredients.

The Sampling Snafu

Imagine I want to know the average height of humans. If I only measure the starting lineup of the Golden State Warriors, my data will suggest that the average human is 6′7″ and excellent at three-pointers.

The Red Flag: If a study claiming to represent “All Americans” only surveyed people at a luxury yacht club in the Hamptons on a Tuesday morning, the results aren’t “false”—they’re just only applicable to people who own yachts and don’t work on Tuesdays.
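For anyone who would rather see the yacht-club problem than take it on faith, here is a minimal sketch in Python. Everything in it is invented for illustration: the population of 100,000 heights, the 170 cm average, the 10 cm spread, and the 195 cm "basketball tryout" cutoff are all assumptions, not real data.

```python
import random
import statistics

# Hypothetical population: 100,000 adult heights in cm (made-up parameters).
rng = random.Random(42)
population = [rng.gauss(170, 10) for _ in range(100_000)]

# A fair sample: 500 people drawn at random from everyone.
random_sample = rng.sample(population, 500)

# A biased sample: only people tall enough for an imaginary pro tryout.
tall_only_sample = [h for h in population if h > 195]

population_mean = statistics.mean(population)
random_mean = statistics.mean(random_sample)
tall_only_mean = statistics.mean(tall_only_sample)

print(f"Whole population:  {population_mean:.1f} cm")
print(f"Random sample:     {random_mean:.1f} cm")
print(f"Tall-only sample:  {tall_only_mean:.1f} cm")
```

The random sample of 500 should land close to the true average, while the tall-only sample misses badly no matter how many people it contains. A bigger biased sample is still a biased sample.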

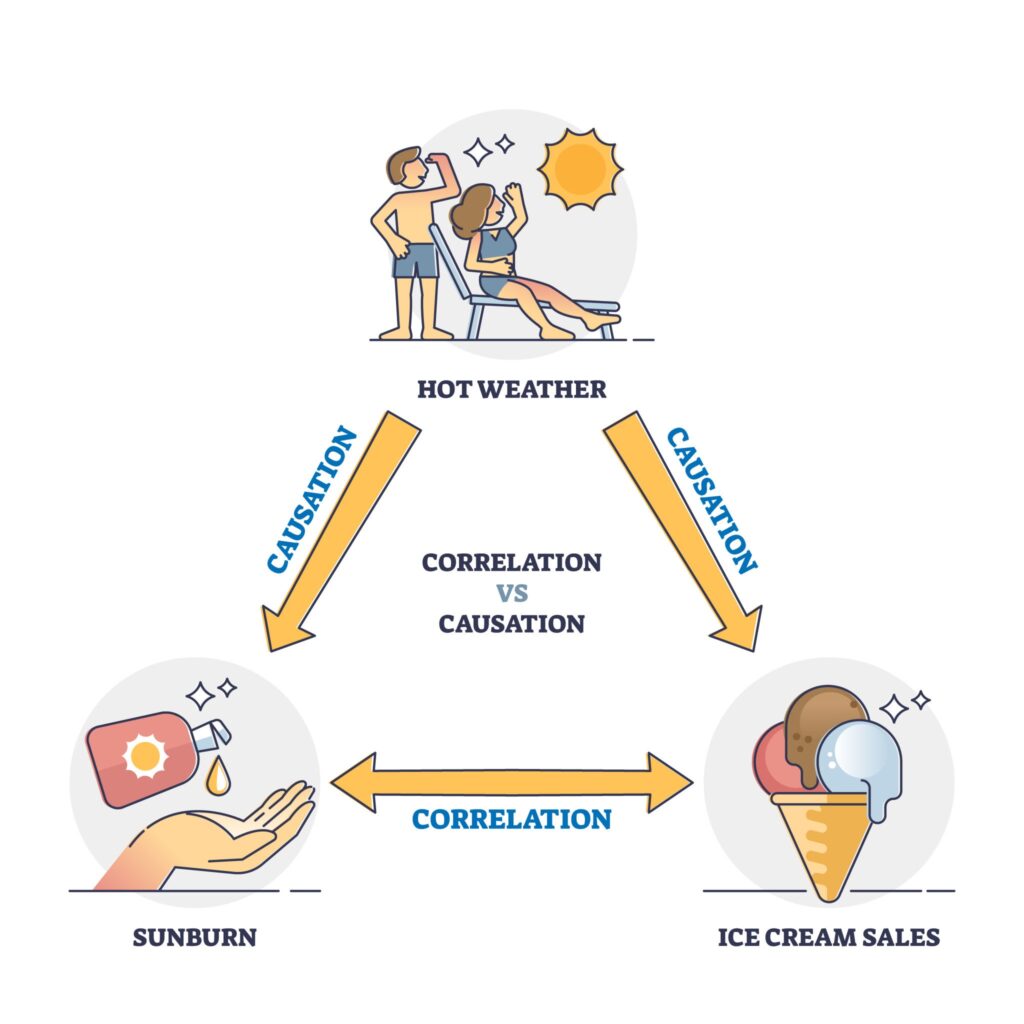

The Correlation Carousel

We’ve all seen the headlines: “Eating Cheese Linked to High Intelligence.” We want to believe this because cheese is delicious. However, valid research methods require us to ask if the cheese caused the smarts, or if smart people simply have the disposable income to afford the “fancy” Gruyère.

The public tends to skip the “how” and “why” and go straight to “I’m buying a block of cheddar to pass my CPA exam.” When we dismiss studies because they “change their minds all the time,” we are actually watching the scientific method work. It’s not “changing your mind”; it’s “updating your software.”

3. The Art of the Statistical Smokescreen

Now, let’s be fair to the skeptics: statistics can be used for mischief. This is where the “biased viewpoint” comes in. If you aren’t looking closely, a graph can lie to your face while smiling.

The Y-Axis Magic Trick

If I want to show a “massive explosion” in the price of coffee, I can make a graph where the Y-axis starts at $4.95 and ends at $5.00. A five-cent jump will look like a vertical climb to the moon.

Technically, the numbers are accurate. Morally? It’s the visual equivalent of a jump-scare in a horror movie. Learning to check the scale of a graph is the statistical equivalent of checking the fine print on a “Free Cruise” flyer.

The “Significant” Misunderstanding

In the real world, “significant” means “important.” In statistics, “significant” just means “a result like this would be unlikely if nothing but chance were at work.” It says nothing about how big or useful the effect is.

If a study finds a statistically significant increase in hair growth from a new cream, it might mean participants grew three extra hairs. Is it significant to a mathematician? Yes! Is it significant to a bald man? Absolutely not. When the public sees “Significant Results,” they expect a miracle, and when they get three hairs, they scream that science is a scam.
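The three-hairs problem can be put into numbers. The sketch below is illustrative only: the 3-hair average gain, the 50-hair standard deviation, and the 10,000 participants per group are all invented, and it uses a simple two-sample z-test with a known, shared standard deviation rather than whatever test a real study would choose.

```python
import math

def two_sample_z_test(mean_diff, sd, n_per_group):
    """Two-sided p-value for a difference in means.

    Assumes equal group sizes and a known common standard deviation.
    """
    standard_error = sd * math.sqrt(2 / n_per_group)
    z = mean_diff / standard_error
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Made-up numbers for the hair cream trial.
extra_hairs = 3        # average gain in the cream group
sd_hairs = 50          # person-to-person spread in hair counts
n_per_group = 10_000   # participants in each arm

z, p_value = two_sample_z_test(extra_hairs, sd_hairs, n_per_group)
cohens_d = extra_hairs / sd_hairs  # effect size in standard-deviation units

print(f"z = {z:.2f}, p = {p_value:.6f}")
print(f"Cohen's d = {cohens_d:.2f}")
```

With these assumed numbers the p-value is far below 0.05, yet the effect size is 0.06 standard deviations, which is tiny by any common rule of thumb. A large enough sample can make almost any difference "significant," so the size of the effect deserves its own look.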

4. How to Spot a “Dud” (Without a PhD)

You don’t need to be a grandmaster of calculus to identify a suspicious study. You just need to be a professional skeptic. Here is the “Sniff Test” for the modern graduate:

Who paid for the party? If a study finding that “Sugar is actually a vegetable” was funded by the “Association of Gummy Bear Manufacturers,” you might want to take it with a grain of… well, sugar.

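The “Double-Blind” Date: Was the study double-blind, meaning neither the participants nor the researchers knew who was getting the “Real Medicine” and who was getting the “Sugar Pill”? If the researchers knew, they might subconsciously cheer for the medicine. If the participants knew, their expectations alone could change how they report feeling. Either way, the results are compromised.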

Sample Size Matters: If a study says “80% of people prefer Brand X,” look for the small print. If they only asked five people, and one of them was the CEO’s mom, that 80% is functionally meaningless.

5. The Tragedy of the Dismissive Mindset

The real danger isn’t that people are bad at math; it’s that we’ve made “doubting everything” a personality trait. When we dismiss valid research because it’s inconvenient or contradicts our “gut feeling,” we lose our grip on reality.

Your gut is great for deciding if you should eat that third taco. It is terrible at determining the efficacy of carbon sequestration or the long-term effects of interest rate hikes.

By applying the rules of Technical Evaluation, we don’t have to be victims of “fake news” or “manipulated stats.” We can look at a study, see that the p-value is well below 0.05, the sample size is 2,000, the methodology was peer-reviewed, and say: “I don’t like what this says, but it’s probably true.” That is the hallmark of a true education.

Conclusion: Empathy for the Numerophobic

It’s easy to poke fun at the person who thinks a “Standard Deviation” is a fancy way of getting lost on a road trip. Statistics are intimidating. They represent a world that is messy, probabilistic, and rarely offers the black-and-white certainty we crave.

But as college graduates, we have a responsibility. We can’t just throw the baby out with the bathwater (especially since there’s no statistical evidence that babies enjoy being thrown). We must be the ones to explain that a “Margin of Error” isn’t a “Mistake”—it’s an admission of honesty.

The next time you hear someone dismiss a study because “you can’t trust the experts,” remind them that while experts can be wrong, they are usually “wrong” in a way that is documented, measured, and eventually corrected by more research. The “Guy I Know,” on the other hand, is usually just loud.

So, let us toast to the humble statistic: frequently misunderstood, occasionally manipulated, but ultimately the only thing standing between us and a world governed by Aunt Brenda’s ghosts.

The Statistical Red Flags Checklist

To help you navigate the sea of “breakthrough studies” and “shocking data,” here is a quick-reference guide. Use this the next time a headline makes a claim that seems too good (or too weird) to be true.

1. The “Who’s Buying?” Test (Funding Bias)

The Red Flag: The study is funded by an organization that directly benefits from the result.

The Reality: Conflict of interest doesn’t always mean the data is fake, but it does mean the researchers might have “cherry-picked” the most flattering results while burying the boring or negative ones.

2. The “N” Factor (Sample Size)

The Red Flag: The study uses a tiny group (e.g., “12 college students”) to make a claim about the entire human race.

The Reality: Small samples produce volatile results, because a couple of unusual participants can swing the whole average. You need a large enough “N” (sample size) to ensure that the results aren’t just a fluke.
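To see how much "N" matters, here is a rough sketch of the standard 95% margin of error for a survey percentage, applied to the "80% prefer Brand X" claim from earlier. It assumes a simple random sample and uses the textbook normal approximation, which is itself shaky for very small groups, so treat the small-N rows as a lower bound on how unreliable they are.

```python
import math

def margin_of_error(p_hat, n, z=1.96):
    """Approximate 95% margin of error for a sample proportion.

    Normal approximation; assumes a simple random sample of size n.
    """
    return z * math.sqrt(p_hat * (1 - p_hat) / n)

for n in (5, 12, 100, 2000):
    moe = margin_of_error(0.8, n)
    print(f"n = {n:>4}: 80% plus or minus {moe * 100:.1f} points")
```

With five respondents the margin is roughly 35 percentage points, so "80%" could plausibly mean anything from a coin flip to near-unanimity. With 2,000 respondents it shrinks to under 2 points.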

3. The “Relative vs. Absolute” Risk

The Red Flag: “New snack increases heart disease risk by 50%!”

The Reality: If the original risk was 2 in 1,000,000 and it rose to 3 in 1,000,000, that is a 50% increase—but your actual risk is still basically zero. Always ask for the absolute numbers.
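The arithmetic behind that headline fits in a few lines. This sketch uses the same 2-in-a-million and 3-in-a-million figures from the example above; the variable names are just for illustration.

```python
from fractions import Fraction

# Risks from the example: 2 in 1,000,000 before, 3 in 1,000,000 after.
baseline_risk = Fraction(2, 1_000_000)
new_risk = Fraction(3, 1_000_000)

# Relative increase: the change measured against where you started.
relative_increase = (new_risk - baseline_risk) / baseline_risk

# Absolute increase: the change in your actual chance of harm.
absolute_increase = new_risk - baseline_risk

print(f"Relative increase: {float(relative_increase):.0%}")
print(f"Absolute increase: {float(absolute_increase) * 1_000_000:.0f} extra case per million people")
```

Both numbers are true. The headline simply picked the scarier one.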

4. The Spurious Correlation

The Red Flag: Two unrelated things moving in the same direction.

The Reality: Just because ice cream sales and shark attacks both go up in June doesn’t mean Rocky Road causes Great White bites. It means it’s summer.
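A small simulation makes the "it's summer" point concrete. All of the numbers below are invented: temperature drives both ice cream sales and shark encounters, and neither one affects the other. The sketch then checks the correlation twice, once raw and once after removing the straight-line effect of temperature from both series.

```python
import math
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def residuals(ys, xs):
    """What is left of ys after subtracting a least-squares line fit on xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return [(y - mean_y) - slope * (x - mean_x) for x, y in zip(xs, ys)]

# Hypothetical daily data: temperature is the hidden common cause.
rng = random.Random(7)
temperature = [rng.uniform(0, 30) for _ in range(2000)]
ice_cream = [2.0 * t + rng.gauss(0, 5) for t in temperature]
shark = [0.5 * t + rng.gauss(0, 2) for t in temperature]

raw_r = pearson(ice_cream, shark)
adjusted_r = pearson(residuals(ice_cream, temperature),
                     residuals(shark, temperature))

print(f"Raw correlation:                 {raw_r:.2f}")
print(f"After accounting for temperature: {adjusted_r:.2f}")
```

The raw correlation comes out strong, and it all but vanishes once temperature is accounted for. Real confounders are rarely this tidy or this easy to measure, but the logic is the same.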

5. Data Dredging (P-Hacking)

The Red Flag: The study measures 50 different variables and only one shows a result.

The Reality: If you test enough random things, eventually one will show a “statistically significant” connection just by sheer luck. This is how we get studies claiming that “jellybeans cause acne.”
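The "sheer luck" part is easy to quantify. The sketch below assumes the 50 tests are independent, that none of the variables has any real effect, and that each test uses the usual 0.05 threshold. Those are simplifying assumptions, not a description of any particular study.

```python
alpha = 0.05     # conventional significance threshold per test
num_tests = 50   # number of variables checked

# Chance that at least one test comes up "significant" by luck alone.
prob_at_least_one_fluke = 1 - (1 - alpha) ** num_tests

# Average number of lucky "hits" you would expect.
expected_flukes = alpha * num_tests

# Bonferroni correction: a stricter per-test threshold that keeps the
# overall false-alarm rate at or below alpha.
bonferroni_alpha = alpha / num_tests

print(f"Chance of at least one fluke: {prob_at_least_one_fluke:.1%}")
print(f"Expected number of flukes:    {expected_flukes:.1f}")
print(f"Bonferroni threshold:         {bonferroni_alpha:.3f}")
```

Under these assumptions there is about a 92% chance of at least one false alarm, so one "hit" out of 50 is closer to the expected outcome than to a discovery. A study that tested many things should say so and adjust its threshold accordingly.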

6. The Visual Deception (Truncated Graphs)

The Red Flag: A bar chart or line graph where the Y-axis starts at a high number (like 90) instead of 0.

The Reality: This is designed to make a 1% difference look like a 500% difference. Always look at the axis labels before reacting to the shape of the line.
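One way to put a number on the trick is to compare the change the picture shows with the change in the data, an idea usually credited to Edward Tufte as the "lie factor." The sketch below is a simplified version for two bars, and the values 91 and 92 are hypothetical.

```python
def lie_factor(value_a, value_b, axis_start):
    """Ratio of the change the chart shows to the change in the data.

    A result of 1.0 means the picture is honest; larger means exaggerated.
    """
    height_a = value_a - axis_start
    height_b = value_b - axis_start
    shown_change = (height_b - height_a) / height_a
    real_change = (value_b - value_a) / value_a
    return shown_change / real_change

# Hypothetical bars: 91 and 92, drawn on two different axes.
honest = lie_factor(91, 92, axis_start=0)
truncated = lie_factor(91, 92, axis_start=90)

print(f"Axis starting at 0:  lie factor {honest:.0f}")
print(f"Axis starting at 90: lie factor {truncated:.0f}")
```

Starting the axis at 90 makes the second bar look twice as tall as the first, even though the real difference is about 1%. That is an exaggeration of roughly 91 times, all without changing a single number.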