The Algorithmic Kill Chain: A Comprehensive Analysis of Project Maven’s Evolution, Ethical Imperatives, and Strategic Equilibrium

The character of modern warfare is undergoing a fundamental transformation, shifting from a paradigm of kinetic endurance to one of algorithmic speed. At the epicenter of this shift is Project Maven, formally designated as the Algorithmic Warfare Cross-Functional Team (AWCFT). Established in 2017, this initiative represents the Department of Defense’s (DoD) premier "pathfinder" program, designed to harness the power of artificial intelligence (AI) and machine learning (ML) to maintain a decisive strategic advantage over increasingly capable peer and near-peer adversaries.1 This report provides an exhaustive examination of Project Maven, detailing its historical foundations, the profound ethical controversies it has ignited, its current operational integration within global conflict zones, and a forward-looking forecast of its role within the burgeoning architecture of Combined Joint All-Domain Command and Control (CJADC2).

Historical Foundations and the Third Offset Strategy

The genesis of Project Maven was not an isolated technological experiment but a calculated response to a looming strategic crisis. In the mid-2010s, senior defense leadership observed that the sheer volume of data generated by the United States’ vast network of Intelligence, Surveillance, and Reconnaissance (ISR) sensors had reached a point of "cognitive saturation" for human analysts.3 By 2017, the Department was collecting millions of hours of video footage that went largely unexploited because the human workforce was physically incapable of scanning it in real time.3

On April 26, 2017, then-Deputy Secretary of Defense Robert O. Work issued a memorandum establishing the AWCFT.3 The objective was to turn "enormous volumes of data" into "actionable intelligence and insights at speed".3 This was a core component of the "Third Offset Strategy," which posited that AI and autonomous systems would define the next generation of warfare, much as nuclear weapons and precision-guided munitions had in previous eras.5

The Initial Mandate and Rapid Prototyping

Project Maven’s first operational task was highly specific: improving the Processing, Exploitation, and Dissemination (PED) of full-motion video (FMV) from tactical Unmanned Aerial Systems (UAS) in support of the defeat-ISIS campaign.1 The program adopted a "rapid acquisition" model, utilizing 90-day sprints to integrate commercial AI capabilities into existing military programs of record.3 This model broke the traditional defense contracting mold by aggressively seeking talent from the commercial tech sector, facilitated by the Defense Innovation Unit (DIU).7

Evolution of Technical Scope (2017–2022)

While the project began with drone video, its scope rapidly expanded to include a wide array of data sources. By 2019, Maven was developing algorithms for Wide Area Motion Imagery (WAMI), Synthetic Aperture Radar (SAR), and Overhead Persistent Infrared (OPIR) systems.2 The technical goal was to create a "federated architecture" of learning analytical engines that could operate across land, sea, air, cyber, and space domains.1

| Developmental Phase | Primary Technology Focus | Strategic Objective |
| --- | --- | --- |
| Phase I (2017) | Computer Vision for FMV | Automate object detection (persons, vehicles, weapons) for counter-ISIS ops.2 |
| Phase II (2018-2019) | Multi-Sensor Fusion | Integrate SAR, WAMI, and EO imagery to support the National Defense Strategy.2 |
| Phase III (2020-2022) | AI at the Edge | Deploy algorithms directly onto sensor platforms to reduce latency.2 |
| Phase IV (2023-Present) | LLM and Generative AI | Integrate large language models for planning, analysis, and target prioritization.7 |

The Crisis of Ethical Participation: The Google Controversy

The most significant early hurdle for Project Maven was not technical, but social and ethical. In late 2017, details of Google’s involvement as a primary provider of the project’s machine learning infrastructure—specifically the use of its TensorFlow platform—began to circulate internally among its employees.8

The "Never Again" Pledge and Employee Revolt

The internal dissent at Google was catalyzed by a broader politicization of the tech workforce following the 2016 U.S. presidential election. Many workers had signed the "Never Again" pledge, promising to refuse to build databases that identified people by race, religion, or national origin.8 When it became clear that Google’s AI was being used to "improve targeting for drone strikes," thousands of employees signed an internal petition demanding the cancellation of the contract.8

Protesting workers voiced several core concerns:

- Fundamental Pacifism: The belief that "Google should not be in the business of war".10

- Automation of Lethality: Fear that AI-assisted targeting was a "foot in the door" for fully autonomous weapon systems where a computer makes the decision to kill.11

- Lack of Transparency: Allegations that Google leadership had downplayed the offensive nature of the project.8

By June 2018, the pressure became untenable. Google announced it would not renew its contract for Project Maven and subsequently released a set of "AI Principles" that prohibited the development of technology for use in weapons.10 This exit forced the DoD to pivot toward a new consortium of defense-focused tech firms, most notably Palantir Technologies and later Anduril and Anthropic.7

Current Picture: Algorithmic Warfare in Global Conflicts

By 2024–2026, Project Maven had transitioned from an experimental "pathfinder" into a permanent "Program of Record" administered jointly by the National Geospatial-Intelligence Agency (NGA) and the Chief Digital and Artificial Intelligence Office (CDAO).7 Its current iteration, often centered on the "Maven Smart System" (MSS), is actively shaping the outcomes of major global conflicts.

The War in Ukraine and Russian Attrition

Project Maven’s role in the Ukraine conflict has been pivotal. Working with the XVIII Airborne Corps, Maven integrates data from a sparse network of sensors to create a high-fidelity "virtual representation" of the battlefield.1 This situational awareness has allowed Ukrainian forces to identify and track Russian troop movements with unprecedented precision. Notably, Maven is credited with providing the intelligence that enabled the precision targeting of General Valery Gerasimov on May 1, 2022.1

Operation Epic Fury and the 2026 Iran War

In the most dramatic application of algorithmic warfare to date, the Maven Smart System served as the backbone for Operation Epic Fury in early 2026. This operation demonstrated the scalability of AI-enabled targeting:

- Targeting Scale: Maven facilitated the striking of over 1,000 targets in the first 24 hours of the conflict.7

- Decision Support: The system used Anthropic’s Claude 3.5 models to synthesize intelligence reports and suggest the most effective military force to apply to each target.7

- Outcome: The strikes reportedly decimated Iranian military leadership across 24 provinces and resulted in the death of Supreme Leader Khamenei.15

The Anthropic Supply Chain Pivot (March 2026)

The current picture is also defined by shifting geopolitical risks. On March 4, 2026, the DoD designated Anthropic as a "supply chain risk" and ordered the phasing out of Claude from military systems within six months.7 This decision underscores the Department's growing concern that dependencies on commercial AI providers with complex international ties could become a vulnerability in a high-end conflict.13

Performance Metrics: The Kill Chain Revolution

The operational success of Project Maven is measurable through radical improvements in the speed and efficiency of the "kill chain"—the process of finding, fixing, tracking, targeting, engaging, and assessing an adversary.

| Metric | Traditional (Manual) | Maven Smart System (2024-2026) |
| --- | --- | --- |
| End-to-End Targeting Time | ~743 minutes (2021 baseline) 7 | <1 minute 7 |
| Targets Processed per Hour | ~30 targets per analyst 7 | ~80 targets per analyst 7 |
| Targeting Cell Size | ~2,000 personnel (Iraq 2003) 7 | ~20 personnel (Scarlet Dragon 2023) 7 |
| Machine-Generated Intel | Negligible | 100% (Projected for June 2026) 7 |
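The scale of these changes is easier to compare when each row is expressed as a ratio. The short Python sketch below does that arithmetic using only the figures reported in the table; treating "<1 minute" as an upper bound of exactly one minute is an assumption made here so that the result is a conservative lower bound on the speedup, and the variable names are illustrative.

```python
# Figures as reported in the metrics table above.
BASELINE_MINUTES = 743          # manual end-to-end targeting time (2021 baseline)
MAVEN_MINUTES_UPPER_BOUND = 1   # "<1 minute" treated as at most 1 minute (assumption)
BASELINE_TARGETS_PER_HOUR = 30  # per analyst, manual
MAVEN_TARGETS_PER_HOUR = 80     # per analyst, Maven Smart System
BASELINE_CELL_SIZE = 2000       # personnel, Iraq 2003
MAVEN_CELL_SIZE = 20            # personnel, Scarlet Dragon 2023


def ratio(before, after):
    """Return how many times larger `before` is than `after`."""
    return before / after


# Lower bound on the speedup, because the Maven figure is an upper bound.
timeline_speedup = ratio(BASELINE_MINUTES, MAVEN_MINUTES_UPPER_BOUND)
throughput_gain = ratio(MAVEN_TARGETS_PER_HOUR, BASELINE_TARGETS_PER_HOUR)
cell_size_reduction = ratio(BASELINE_CELL_SIZE, MAVEN_CELL_SIZE)

print(f"Targeting timeline: at least {timeline_speedup:.0f}x faster")
print(f"Per-analyst throughput: about {throughput_gain:.2f}x higher")
print(f"Targeting cell: {cell_size_reduction:.0f}x fewer personnel")
```

Read this way, the reported timeline compression (at least 743-fold) is far larger than the reported per-analyst throughput gain (roughly 2.7-fold), which suggests the two rows measure different stages of the process and should not be read as interchangeable indicators.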

Deep Ethical Discussion: The Moral Texture of Algorithmic War

The integration of AI into lethal decision-making processes raises profound ethical questions that transcend simple compliance with International Humanitarian Law (IHL). These concerns can be analyzed through the lenses of dehumanization, responsibility gaps, and automation bias.

Dehumanization: The Reduction to Data Points

A central ethical critique of Project Maven is its capacity to "dehumanize" the target. By reducing human beings to "data points" or yellow-outlined boxes on a digital interface, the technology may erode the psychological barriers to killing.16 Scholars identify two distinct mechanisms of dehumanization in this context:

- Mechanistic Dehumanization: Derived from a "cold" cognitive micro-foundation, this denies targets human qualities like critical thinking and agency, treating them instead as objects to be "processed".16

- Animalistic Dehumanization: An affective mechanism where targets are viewed as "inferior" through the lens of biased training data, which may carry racial or gender prejudices.16

The Responsibility Gap and Human Accountability

The "Responsibility Gap" refers to the difficulty of assigning legal or moral blame when an AI system contributes to an unintended outcome, such as the killing of the civilian Abdul-Rahman al-Rawi in Iraq in 2024.14 If an algorithm identifies a target and a human operator merely approves the recommendation due to time pressure, who is responsible for a mistake?

- The Operator: Used the system as designed but failed to catch a subtle error.

- The Developer: Created a model with inherent biases or failure modes.

- The Commander: Deployed a system with known accuracy limitations (e.g., Maven's accuracy dropping below 30% in desert terrain).14

As AI takes over four of the six steps of the kill chain, the human role risks becoming a "moral buffer" that provides legal cover without exercising meaningful judgment.7

Automation Bias and De-skilling

Automation bias is the human tendency to over-trust the output of a computer.18 In high-stress combat environments, this bias is amplified by "cognitive offloading," where analysts stop critically evaluating the sensor data because the AI is "usually right." This leads to "human de-skilling," where the workforce loses the ability to perform manual identification and verification, creating a systemic vulnerability if the AI is spoofed or fails.14

Analytical Game Theory-Based Cost-Benefit Analysis

The adoption of Project Maven and the broader AI arms race can be effectively modeled using game theory to understand the rational (and irrational) incentives driving military escalation.

The Prisoner’s Dilemma of AI Militarism

The competition between the United States and China for AI supremacy is a classic Prisoner’s Dilemma. Both states recognize that a world without autonomous weapons would be safer and more stable (the "Cooperate" outcome). However, the "Defect" strategy (accelerated development) is the dominant strategy: it yields the better outcome for a state whichever course its rival takes, and neither side can trust the other to exercise restraint.19

In this game, the payoff matrix is heavily skewed by the First-Mover Advantage. If one side achieves a "Singleton" (a super-intelligent AI capable of preventing any other system from challenging it), that side gains absolute global dominance.21 The fear of being on the losing side of this "winner-take-all" scenario makes the downside of restraint effectively infinite.21
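The structure of the dilemma can be made concrete with a small two-player game. The Python sketch below uses hypothetical ordinal payoffs (the numbers 0, 1, 3, and 5 are illustrative assumptions, not estimates drawn from any source) and checks three properties claimed above: accelerating ("D") is a dominant strategy, mutual acceleration is the only Nash equilibrium, and mutual restraint ("C") would leave both sides better off.

```python
from itertools import product

# Hypothetical ordinal payoffs (higher is better), given as (row player, column player).
# "C" = restrain development, "D" = accelerate development.
PAYOFFS = {
    ("C", "C"): (3, 3),  # mutual restraint
    ("C", "D"): (0, 5),  # row restrains while column accelerates
    ("D", "C"): (5, 0),  # row accelerates while column restrains
    ("D", "D"): (1, 1),  # mutual acceleration
}
STRATEGIES = ("C", "D")


def row_payoff(row, col):
    return PAYOFFS[(row, col)][0]


def col_payoff(row, col):
    return PAYOFFS[(row, col)][1]


def is_nash(row, col):
    """True if neither player gains by unilaterally switching strategy."""
    row_ok = all(row_payoff(row, col) >= row_payoff(alt, col) for alt in STRATEGIES)
    col_ok = all(col_payoff(row, col) >= col_payoff(row, alt) for alt in STRATEGIES)
    return row_ok and col_ok


def dominant_row_strategy():
    """Return the row strategy that strictly beats every alternative
    against every column move, or None if no such strategy exists."""
    for s in STRATEGIES:
        alternatives = [t for t in STRATEGIES if t != s]
        if all(row_payoff(s, col) > row_payoff(t, col)
               for col in STRATEGIES for t in alternatives):
            return s
    return None


nash_equilibria = [cell for cell in product(STRATEGIES, repeat=2) if is_nash(*cell)]
print("Dominant strategy:", dominant_row_strategy())
print("Nash equilibria:", nash_equilibria)
```

Any payoffs with the same ordering (temptation above mutual restraint, above mutual acceleration, above unilateral restraint) give the same result; the first-mover effect described above corresponds to widening the gap between the temptation payoff and the others.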

Mathematical Modeling of the AI Arms Race

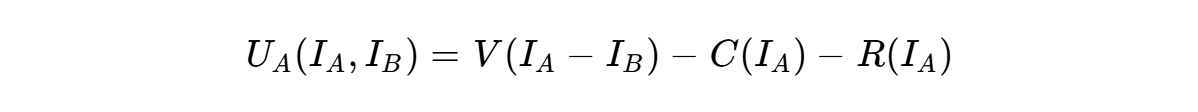

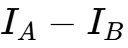

We can represent the utility ($U$) of AI investment ($I$) for states $A$ and $B$:

$$U_A = V(I_A - I_B) - C - R$$

Where:

- $V$ is the perceived strategic value of the relative advantage ($I_A - I_B$).

- $C$ is the direct economic cost of development.

- $R$ is the existential risk (e.g., accidental escalation, AI misalignment).

Because $V$ is non-linear and grows exponentially as $I_A$ surpasses $I_B$, the incentive is always to increase $I_A$ regardless of the growth of $C$ and $R$. This creates a Nash Equilibrium of mutual defection where both sides spend trillions on systems that ultimately make both less secure.21
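The claim that escalation is self-reinforcing can be explored numerically. The following Python sketch treats a state's utility as the value of its relative advantage minus its development cost minus a shared risk term, as described above. The specific functional forms (an exponential value term, linear cost and risk) and every parameter value are assumptions chosen for illustration; they are not taken from the cited sources.

```python
import math

# Illustrative parameters (assumptions, not estimates from any source).
K = 1.0               # steepness of the exponential value of relative advantage
COST = 0.1            # economic cost per unit of a state's own investment
RISK = 0.05           # shared risk per unit of combined investment
LEVELS = range(0, 6)  # permitted investment levels: 0 through 5


def utility(own, other):
    """Value of relative advantage minus own cost minus shared risk."""
    value = math.exp(K * (own - other))
    cost = COST * own
    risk = RISK * (own + other)
    return value - cost - risk


def best_response(other):
    """Investment level that maximizes utility against a fixed rival level."""
    return max(LEVELS, key=lambda own: utility(own, other))


# Best response to each possible rival investment level.
responses = {other: best_response(other) for other in LEVELS}
top = max(LEVELS)

print("Best responses:", responses)
print("Utility at mutual maximum:", utility(top, top))
print("Utility at mutual restraint:", utility(0, 0))
```

Under these assumed parameters the best response to every rival level is the maximum investment, so mutual maximum investment is a Nash equilibrium, yet each state ends up with lower utility there than under mutual restraint. The result is sensitive to the parameters: raising `COST` or `RISK` far enough makes restraint the best response to a heavily invested rival, so the "always escalate" conclusion holds only while the value of relative advantage grows faster than cost and risk.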

Cost-Benefit Analysis (CBA)

A comprehensive CBA must weigh the tactical benefits against the systemic risks.

| Category | Benefits | Costs and Risks |
| --- | --- | --- |
| Tactical/Operational | Decision advantage; radical reduction in sensor-to-shooter timelines; protection of service members.5 | Civilian casualties from algorithmic error; vulnerability to adversarial attacks on ML models.14 |
| Economic | Increased productivity of the intel workforce; capitalization on existing sensor data.4 | Massive capital expenditure on compute ($470.9B US budget for AI in 2025); high cost of high-end GPUs.21 |
| Strategic/Existential | Deterrence of peer adversaries; maintenance of technological "Offset".1 | Accidental escalation at "computer speed"; erosion of human control over the start of wars.19 |
| Institutional | Bureaucratic expansion (AWCFT, JAIC, CDAO); lucrative career transitions for officials.22 | Organizational "Parkinson’s Law" where bureaucracy expands to fit the budget.22 |

The analysis suggests that while the individual cost-benefit ratio for a single military engagement favors Maven, the collective global cost-benefit is negative-sum, as the race consumes resources that could be spent on global challenges like climate change or pandemic preparedness.21

Future Forecast: The Intelligentized Military

The long-term vision for Project Maven is its evolution from a data-processing tool into the core operating system of the "intelligentized military".23

Integration with CJADC2 and ShipOS

By 2030, the Pentagon aims to realize the vision of Combined Joint All-Domain Command and Control (CJADC2), where every sensor on a ship, aircraft, or satellite is seamlessly connected to every shooter via an AI mesh.25 Project Maven is the foundational software for this "Enterprise C2 Suite".27 Related projects, such as the Navy-Palantir "ShipOS," are directly informed by Maven’s ontology and data management breakthroughs.27

The Shift to Predictive AI

Future iterations of Maven will move beyond object identification into "Predictive AI." These systems will analyze historical patterns and real-time data to predict the likelihood of enemy movements or attacks before they happen.23 This "pre-emptive" capability could fundamentally alter the OODA (Observe-Orient-Decide-Act) loop, allowing the U.S. to operate "inside" the adversary's decision cycle.23

Total Machine-Generated Intelligence

A critical milestone is scheduled for June 2026, when the NGA plans to begin transmitting "100 percent machine-generated" intelligence to combatant commanders.7 This represents a shift toward a "software-centric organization" where AI is no longer just an assistant but the primary producer of military knowledge.23

Conclusion: Navigating the Algorithmic Threshold

Project Maven has traveled a remarkable distance from a 2017 memo about drone video to the 2026 reality of AI-driven regime change. Its success in accelerating the kill chain is undeniable, yet this very speed introduces a new class of existential risk. The "Prisoner's Dilemma" of the AI arms race suggests that the technological momentum is likely to continue unabated, driving the military toward ever-greater degrees of autonomy.

To prevent this momentum from resulting in catastrophic miscalculation, the Department of Defense must prioritize the development of "AI Safety" and "Verification" frameworks that are as robust as the targeting algorithms themselves. The goal of future defense policy should not be to "win" the AI race in a zero-sum sense, but to restructure the payoff matrix of global competition through transparency mechanisms, international treaties, and human-centric guardrails. Only by doing so can the U.S. ensure that its algorithmic tools remain servants of human strategy, rather than the masters of human fate.

Works cited

1. AI in Real-Time Warfare: Lessons from Project Maven, accessed March 17, 2026, https://www.orfonline.org/expert-speak/ai-in-real-time-warfare-lessons-from-project-maven

2. Algorithmic Warfare Cross Functional Team (AWCFT) - Minsky DTIC, accessed March 17, 2026, https://dtic.minsky.ai/590_0307588D8Z_6_0400_PB_2022/text

3. Project Maven, accessed March 17, 2026, https://dodcio.defense.gov/Portals/0/Documents/Project%20Maven%20DSD%20Memo%2020170425.pdf

4. Project Maven to Deploy Computer Algorithms to War Zone by Year's End, accessed March 17, 2026, https://www.war.gov/News/News-Stories/Article/Article/1254719/project-maven-to-deploy-computer-algorithms-to-war-zone-by-years-end/

5. Pentagon AI chief praises Palantir tech for speeding battlefield strikes - The Register, accessed March 17, 2026, https://www.theregister.com/2026/03/13/palantirs_maven_smart_system_iran/

6. The Pursuit of AI Is More Than an Arms Race - CNAS, accessed March 17, 2026, https://www.cnas.org/publications/commentary/the-pursuit-of-ai-is-more-than-an-arms-race

7. Project Maven - Wikipedia, accessed March 17, 2026, https://en.wikipedia.org/wiki/Project_Maven

8. Tech Workers Versus the Pentagon - Jacobin, accessed March 17, 2026, https://jacobin.com/2018/06/google-project-maven-military-tech-workers

9. Artificial Intelligence: Implications for Strategy and Power, accessed March 17, 2026, https://nps.edu/documents/115153495/162964388/16-maness+%28strategy%29.pdf/06d1ef1f-753e-5a5d-6aec-5a79911c45e4?t=1770146073727

10. Google Employees Push Back on Government Surveillance Contracts | TechPolicy.Press, accessed March 17, 2026, https://www.techpolicy.press/google-employees-push-back-on-government-surveillance-contracts

11. Amid pressure from employees, Google drops Pentagon's Project Maven account - PBS, accessed March 17, 2026, https://www.pbs.org/newshour/show/amid-pressure-from-employees-google-drops-pentagons-project-maven-account

12. Case study: Google Workers & Project Maven - The Turing Way, accessed March 17, 2026, https://book.the-turing-way.org/ethical-research/activism/activism-case-study-google/

13. Emerge's 2025 Story of the Year: How the AI Race Fractured the Global Tech Order - DizainoArkliukas.LT, accessed March 17, 2026, https://dizainoarkliukas.lt/?p=5743

14. 'We want to use it for everything': How Project Maven became ..., accessed March 17, 2026, https://www.independent.co.uk/news/world/americas/project-maven-ai-us-airstrike-iraq-anthropic-b2929138.html

15. Let's face it: There were never going to be guardrails on military AI. : r/Anthropic - Reddit, accessed March 17, 2026, https://www.reddit.com/r/Anthropic/comments/1ri0b4k/lets_face_it_there_were_never_going_to_be/

16. Dehumanization and Public Support for Emerging Technologies on the Battlefield, accessed March 17, 2026, https://perryworldhouse.upenn.edu/news-and-insight/dehumanization-and-public-support-for-emerging-technologies-on-the-battlefield/

17. The Ethics of Acquiring Disruptive Technologies: Artificial Intelligence, Autonomous Weapons, and Decision Support Systems - NDU Press, accessed March 17, 2026, https://ndupress.ndu.edu/Media/News/News-Article-View/Article/2054156/the-ethics-of-acquiring-disruptive-technologies-artificial-intelligence-autonom/

18. The Algorithmic Battlefield: How AI Systems Like Lavender and Project Maven Are Changing Modern War | by Hamid Mujtaba | Mar, 2026 | Medium, accessed March 17, 2026, https://medium.com/@hamipirzada/the-algorithmic-battlefield-how-ai-systems-like-lavender-and-project-maven-are-changing-modern-war-ef38e0555b21

19. The Prisoner's Dilemma of AI Militarism - Modern Diplomacy, accessed March 17, 2026, https://moderndiplomacy.eu/2025/09/24/the-prisoners-dilemma-of-ai-militarism/

20. A Prisoner's Dilemma in the Race to Artificial General Intelligence - RAND, accessed March 17, 2026, https://www.rand.org/pubs/research_reports/RRA4245-1.html

21. The insane “logic” of the AI arms race | by Tam Hunt | Medium, accessed March 17, 2026, https://tamhunt.medium.com/the-insane-logic-of-the-ai-arms-race-45a5f79f4c0e

22. The Hidden Logic Behind the AI Arms Race: Why Everyone Loses ..., accessed March 17, 2026, https://medium.com/@gerrit1971/the-hidden-logic-behind-the-ai-arms-race-why-everyone-loses-but-no-one-stops-901d0c7762f0

23. Reimagining Military C2 in the Age of AI – Revolution ... - SCSP, accessed March 17, 2026, https://www.scsp.ai/wp-content/uploads/2024/12/DPS-Reimagining-Military-C2-in-the-Age-of-AI.pdf

24. (PDF) Artificial intelligence in the security strategies of the USA and China at the beginning of the 21st century: The struggle for global dominance - ResearchGate, accessed March 17, 2026, https://www.researchgate.net/publication/398716924_Artificial_intelligence_in_the_security_strategies_of_the_USA_and_China_at_the_beginning_of_the_21st_century_The_struggle_for_global_dominance

25. Code, Command, and Conflict: Charting the Future of Military AI - Belfer Center, accessed March 17, 2026, https://www.belfercenter.org/research-analysis/code-command-and-conflict-charting-future-military-ai

26. GAO-25-106454, DEFENSE COMMAND AND CONTROL: Further Progress Hinges on Establishing a Comprehensive Framework, accessed March 17, 2026, https://www.gao.gov/assets/gao-25-106454.pdf

27. DOD maps out plan for new enterprise command-and-control program office, C2 suite, accessed March 17, 2026, https://defensescoop.com/2026/01/06/dod-enterprise-command-and-control-program-office/